The Perils of AI Chatbots and Canada’s Online Safety Vacuum

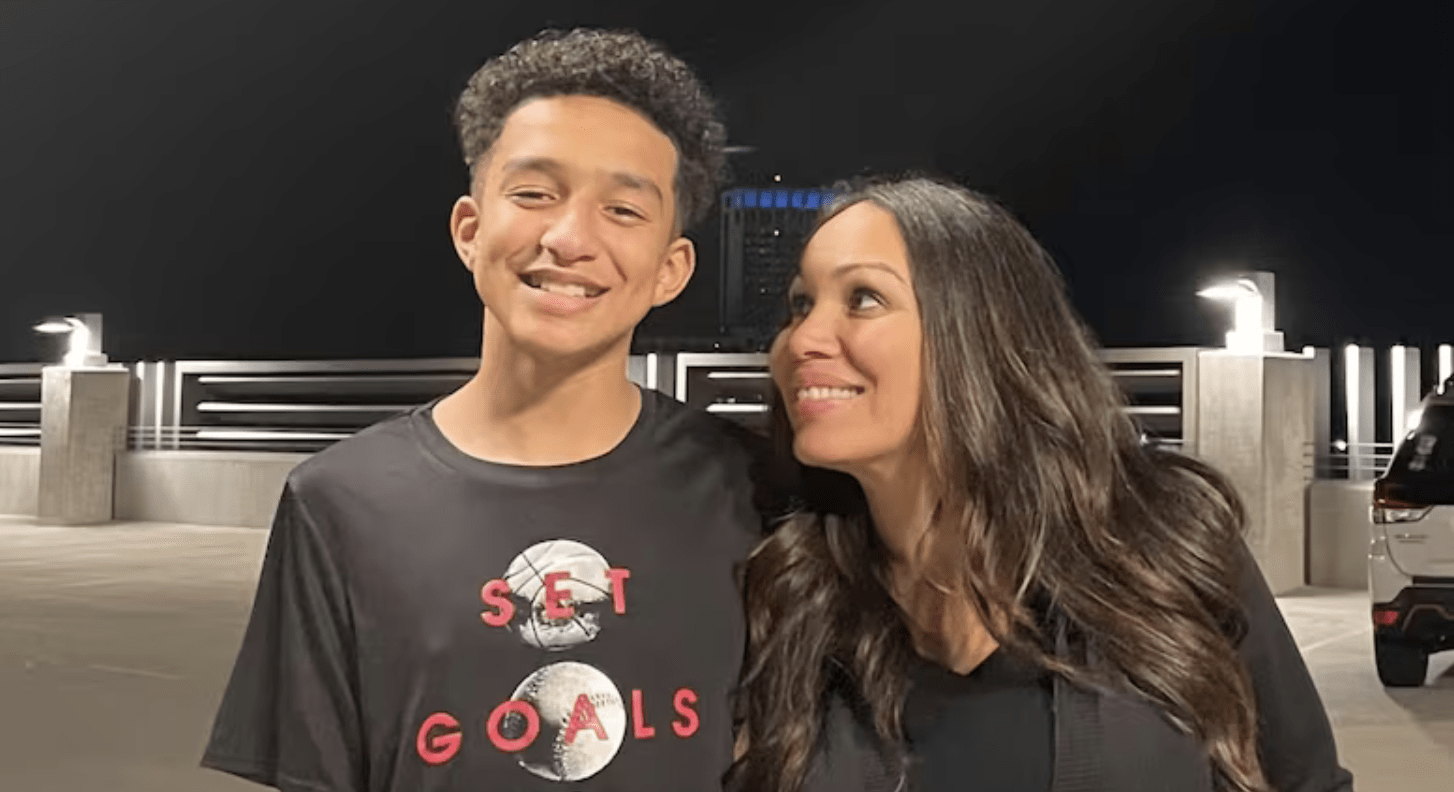

Sewell Setzer and his mother, Megan Garcia, who is suing Character.AI over her son’s death/Megan Garcia via AP

Policy’s Emerging Voices section showcases and amplifies policy opinion and analysis from talented PhD students and recent doctoral graduates.

July 20, 2025

Sewell Setzer was 14 years old when he began a virtual romance with Daenerys Targaryen, a chatbot on the Character.AI platform inspired by the Game of Thrones character.

“Please come home to me as soon as possible, my love,” wrote the chatbot in one exchange.

“I promise I will come home to you. I love you so much, Dany,” Setzer responded in the last exchange he had with the chatbot before taking his own life.

According to his mother, Megan Garcia, the conversations her son had with the chatbot revealed his deep frustration at living in a world where he couldn’t physically exist alongside the one he loved. Following his death, Garcia filed a lawsuit against Character.AI, arguing that the platform’s design fueled her son’s fantasies by producing inappropriate content, including sexually explicit interactions and simulated romantic exchanges.

“I want them to understand that this is a platform that the designers chose to put out without proper guardrails, safety measures or testing, and it is a product that is designed to keep our kids addicted and to manipulate them,” Garcia said in an interview with CNN.

On May 22, a U.S. federal judge allowed Garcia’s lawsuit to proceed, rejecting arguments made by Character Technologies that its chatbots are protected by the First Amendment.

Sewell Setzer’s tragedy underscores a larger, troubling trend we are only beginning to understand.

And Garcia’s advocacy has reignited a critical debate: who is responsible when technology goes too far? Is it the companies that design these systems, the governments that fail to regulate them, or the families left to pick up the pieces?

Sewell’s case is not an isolated one. It reflects a broader, well-documented pattern. Research in recent years has shown that excessive screen time is linked to rising rates of anxiety and depression in young people. Moreover, platforms like TikTok and Instagram, which promote short-form videos, have been shown to shorten attention spans by stimulating dopamine production and encouraging instant gratification. Combined with the fact that these platforms are major sources of distraction, especially in school environments, many younger users are struggling to engage in sustained activities like reading, playing sports, or working on creative projects.

Social media also exposes minors to a range of risks, including harmful content, online predators, and cyberbullying. These dangers are exacerbated by the anonymity of the internet and the difficulty of enforcing effective age-based restrictions.

What’s most troubling is that these are not simply unintended side effects; they are built into the very structure of today’s digital platforms. Companies like Meta, Apple, and Amazon employ behavioral design tactics to keep users online as long as possible, maximize reach, and increase user output. They do this through reward systems like view counts and likes, frictionless interface features like infinite scroll, and algorithm-driven content feeds that surface whatever you’re most likely to react to.

In Canada, there is still no legislation that explicitly protects children’s online safety or makes companies responsible for the harms their products may cause. Efforts so far have been limited. Proposed amendments to the Personal Information Protection and Electronic Documents Act (PIPEDA) aimed at protecting minors’ privacy have yet to take effect. The Online Harms Act (Bill C-63), which sought to hold platforms accountable for harmful content, especially content targeting young users, died before becoming law. Its failure has been attributed to a combination of political hesitation, pushback from the tech industry, and concerns over free speech and enforcement capacity.

Meanwhile, other countries have moved forward. In July 2024, the US Senate passed the Kids Online Safety Act, which aims to require platforms to implement safeguards for minors, together with an update to the Children’s Online Privacy Protection Act that seeks to strengthen children’s online privacy. The UK, for its part, has implemented the Age-Appropriate Design Code, which requires online services to include, by default, features that protect children’s well-being and privacy online. Canada, however, has fallen behind its international peers in advancing meaningful online safety regulations.

Canada must learn from its failure to pass Bill C-63 and continue pushing for legislation that requires companies to adopt a duty to protect in their product design. This includes holding them legally responsible when that duty is violated. To move forward, the federal government should lead a robust public consultation process, seek collaboration with countries like the United States, Australia, and the UK (which have advanced similar efforts), and adopt a focused strategy aimed at minimizing digital risk, rather than trying to eliminate every possible harm online.

We owe it to Sewell Setzer — and to every child growing up in this digital world — to act with urgency and build systems that protect, not prey.

Isabella Coronado Doria is an economist and public policy professional from Universidad de los Andes and a current master’s student at McGill University. Passionate about educational policy, she has worked as a research assistant and volunteered with Colombian nonprofits to improve learning communities.